Christine Perey sent some good news around to the AR Standards mailing lists a couple of days ago: First mobile AR browser interoperability demonstration to take place Feb 25:

It’s official!

The results of a collaborative effort involving metaio, Layar and Wikitude based on a jointly-defined architecture, a Common AR Interchange Format (CARIF) based on candidate OGC standard ARML 2.0 and an AR Launch URL scheme, will be publicly demonstrated on February 25.

The AR Community’s browser interoperability engineering group’s proof of concept “custom browser” implementations will be demonstrated as part of the OGC “Location Standards for a Mobile World” seminar and reception at the ICC in Barcelona.

When using one of these custom AR browsers, content that was originally authored to work using one of the other AR browsers based on geospatial coordinates will load and support pre-defined functions.

…

For more information about the architecture: http://www.perey.com/ARStandards/AR_Browser_Interoperability_Architecture_Jan_21_2014_v1_2.pdf

The announcement was also picked up by Bruce Sterling and posted on his blog: http://www.wired.com/beyond_the_beyond/2014/02/augmented-reality-interoperability-demo/

Congratulations to metaio, Layar and Wikitude!

Congratulations, indeed. This is really good news and a great step towards easier and wider adoption of augmented reality.

The Open Geospatial Consortium has an announcement too: OGC, Layar, Metaio and Wikitude invite Mobile World Congress attendees to AR Interoperability Demo, which says in part:

The common AR interchange format that enables this AR interoperability is based on the candidate OGC ARML 2.0 Encoding Standard that Martin Lechner of Wikitude introduced into the OGC, with the goal to provide an interchange format for Augmented Reality. After it has been successfully tested in the interoperability experiment, ARML 2.0 will be reviewed by the OGC membership to become an adopted OGC standard within the next couple of months. The companies demonstrating AR interoperability believe tomorrow’s AR market will be much more open, and thus much larger, than today’s AR market. Today, a user equipped with an AR-ready device, including sensors and appropriate output/display support, must download a proprietary application to experience content published using an AR experience authoring platform. A subset of these applications are referred to as “AR browsers.” AR browser interoperability benefits at least these four stakeholder groups:

- Content Publishers will be able to offer AR experiences with their content to larger potential audiences (e.g., all users of AR browsers that support interoperability) with equal or lower effort (costs) of preparing/producing AR browser-based experiences with their digital assets,

- Developers of AR experiences will be able to choose the AR experience authoring environment they prefer or is best suited to a project without sacrificing the “basic” experience they can offer their clients’ target audiences and also be able to invest in innovation (specialize) in preparation of highly engaging and interactive experiences,

- Attracted by larger total audience size and lower barrier to entry, there will be more content publishers willing to invest in AR and greater number of developers learning/perfecting AR experience design, generating higher revenues for AR authoring and content management system providers, and

- End users will be able to discover and select AR experiences from a larger catalog while also choosing the AR browser they prefer.

Key sentence there: “After it has been successfully tested in the interoperability experiment, ARML 2.0 will be reviewed by the OGC membership to become an adopted OGC standard within the next couple of months.”

As the ARML 2.0 Standards Working Group puts it:

The ultimate goal of ARML 2.0 is to provide an extensible standard and framework for AR applications to serve the AR use cases currently used or developed. With AR, many different standards and computational areas developed in different working groups come together. ARML 2.0 needs to be flexible enough to tie into other standards without actually having to adopt them, thus creating an AR-specific standard with connecting points to other widely used and AR-relevant standards.

It will be a major advance when this is settled and adopted.

I wrote a point of interest provider, Avoirdupois, that right now only works with Layar. It uses the Layar API to answer in the correct Layar way when Layar asks, “Do you know of any points of interest within 1500 meters of location (x, y)?” If you’re using Layar (in fact a particular layer, or channel, in Layar) and want to know what’s around you that you can see in an AR view, that’s great. It works just as it was supposed to.
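To make the "anything within 1500 meters of (x, y)?" question concrete, here is a minimal sketch of the radius filter a point of interest provider has to run, whatever AR browser is asking. It is not how Avoirdupois is implemented; the `POI` class, the function names and the use of the haversine great-circle formula are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


@dataclass
class POI:
    """Hypothetical point of interest: a title plus WGS84 coordinates."""
    title: str
    lat: float
    lon: float


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def pois_within(pois, lat, lon, radius_m=1500):
    """Return the POIs no farther than radius_m from (lat, lon)."""
    return [p for p in pois
            if haversine_m(lat, lon, p.lat, p.lon) <= radius_m]


if __name__ == "__main__":
    candidates = [
        POI("near", 43.6540, -79.3832),
        POI("far", 43.7000, -79.3832),
    ]
    for poi in pois_within(candidates, 43.6532, -79.3832):
        print(poi.title)
```

The filtering is the easy part and is the same for every browser. What differs is how the request arrives and how the answer has to be packaged.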

But what if you’re using Wikitude? The Wikitude ARchitect specification defines a completely different way of doing things. I was going to look at Retrieving POI Data from a Web Service to see how Avoirdupois could support that.

And Junaio does things differently again, so I was going to have to look at the developer documentation about location-based channels to see how Avoirdupois could handle that too.

Layar, Wikitude, Junaio … all have different ways of asking, “Is there anything of interest near location (x, y) that the user should see?” And all expect different answers. ARML 2.0 will mean there’s just one kind of answer, and all of the AR applications can use it.
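As a rough illustration of the duplication this implies today, the sketch below keeps one internal list of points of interest and needs a separate serializer for each browser. The two response shapes are invented for the example ("browser A" and "browser B") and are not the real Layar, Wikitude or Junaio formats; every name in it is hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass
class POI:
    """Hypothetical internal representation of a point of interest."""
    id: str
    title: str
    lat: float
    lon: float


def to_browser_a(pois):
    """Made-up format A: a flat list of objects under one key."""
    return {
        "hotspots": [
            {"id": p.id, "title": p.title, "lat": p.lat, "lon": p.lon}
            for p in pois
        ]
    }


def to_browser_b(pois):
    """Made-up format B: a GeoJSON-style feature collection."""
    return {
        "type": "FeatureCollection",
        "features": [
            {
                "type": "Feature",
                "id": p.id,
                "properties": {"name": p.title},
                # GeoJSON orders coordinates as [longitude, latitude].
                "geometry": {"type": "Point", "coordinates": [p.lon, p.lat]},
            }
            for p in pois
        ],
    }


# One entry per supported browser; each new browser means a new function.
SERIALIZERS = {"a": to_browser_a, "b": to_browser_b}


def respond(browser, pois):
    """Serialize the same POIs in whatever shape the given browser expects."""
    return json.dumps(SERIALIZERS[browser](pois))


if __name__ == "__main__":
    pois = [POI("1", "Library", 43.6532, -79.3832)]
    print(respond("a", pois))
    print(respond("b", pois))
```

With a shared interchange format, the `SERIALIZERS` table would shrink to a single entry.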

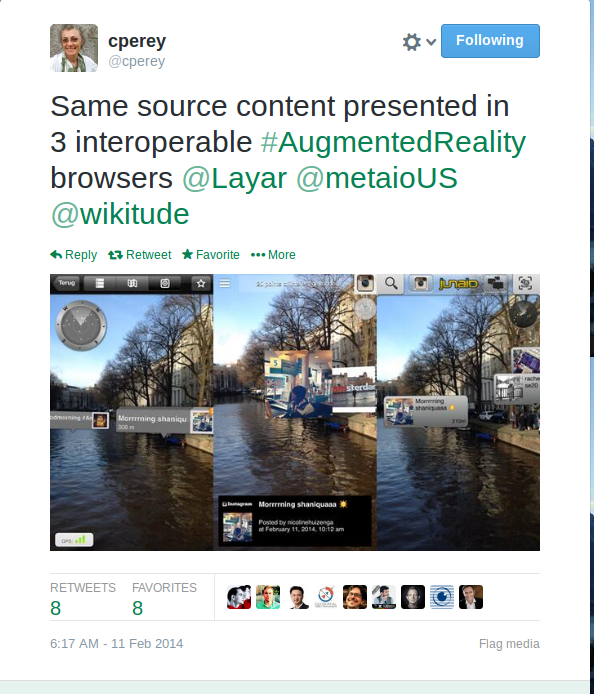

Here’s Christine Perey’s tweet showing the test in action:

When ARML 2.0 is defined then I’ll work to have Avoirdupois use it.

And when there’s an easy way for awe.js to ingest it, and you can easily see standards-based AR in your mobile browser, everything will get much easier and even more exciting.

Miskatonic University Press
